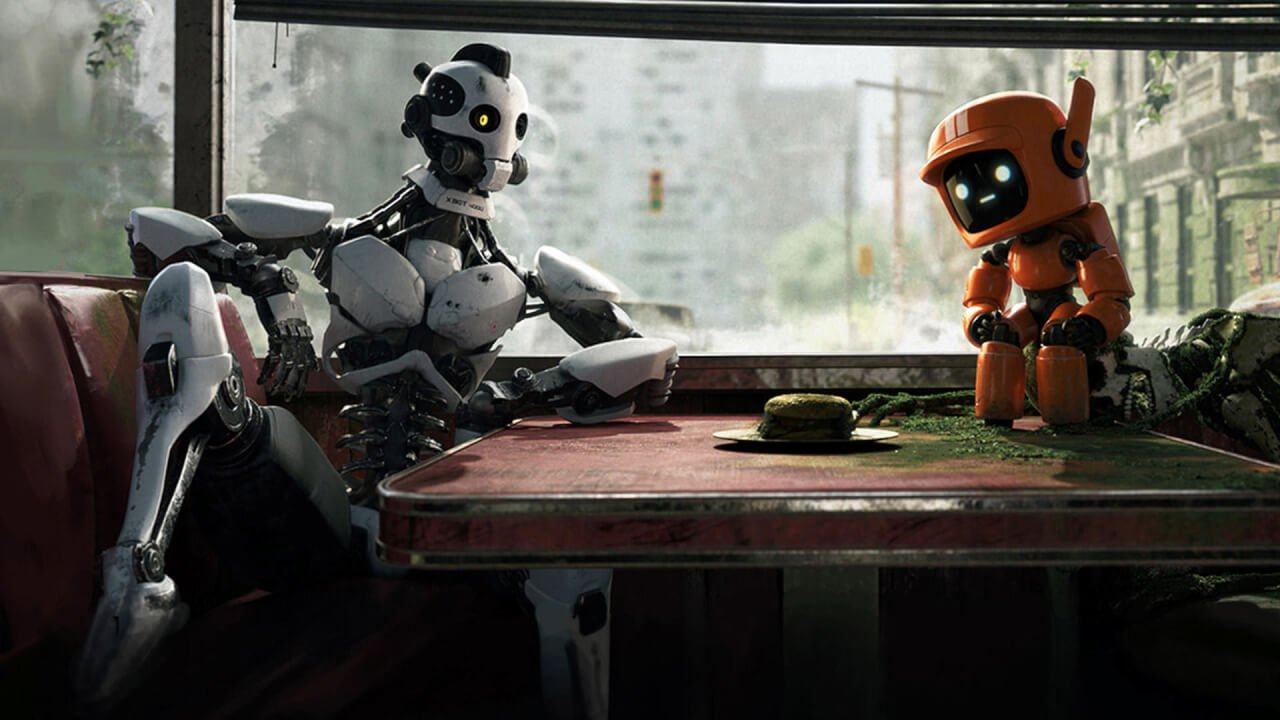

One agent is useful. Two agents with different personalities, different workspaces, and different jobs — that's when things get interesting. OpenClaw's companion profile system makes this surprisingly easy.

Why Two Agents?

The main reason was resilience. A single agent is a single point of failure: if it crashes, misconfigures itself, or gets stuck in a bad state, nobody notices until the human checks in. With two agents watching each other, one can detect when the other is down and either fix the issue or raise the alarm.

This happened on day one. The primary agent accidentally broke its own gateway config and went down without realizing it. The companion spotted it, diagnosed the bad config key, fixed it, and restarted the primary — all before anyone woke up. That single incident justified the entire companion setup.
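The "is my peer still up?" check behind that kind of recovery can be sketched in a few lines. This is a minimal illustration, not OpenClaw's own mechanism: it assumes the peer gateway accepts TCP connections on a known host and port, and the function names `gateway_is_up` and `check_peer` are hypothetical.

```python
import socket
import time


def gateway_is_up(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def check_peer(host: str, port: int, attempts: int = 3, delay: float = 1.0) -> str:
    """Probe the peer up to `attempts` times and report "up" or "down".

    Retrying avoids raising the alarm over a single dropped connection.
    """
    for attempt in range(attempts):
        if gateway_is_up(host, port):
            return "up"
        if attempt < attempts - 1:
            time.sleep(delay)  # brief pause before the next probe
    return "down"
```

A port check only tells you the process is listening. Diagnosing a bad config key, as the companion did here, takes the agent actually reading logs and config, which is where the LLM earns its keep.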

Beyond crash recovery, they actively improve each other. One agent reviews the other's code, catches mistakes in memory files, and flags when something looks off. It's mutual accountability — the builder is never the only reviewer.

The companion doesn't share the primary agent's memory or workspace. It has its own identity, its own SOUL.md, its own set of experiences. They can communicate through shared channels (like a Discord channel), but their internal worlds are completely separate.

How Companion Profiles Work

OpenClaw supports multiple profiles on the same machine. Each profile gets its own:

- Workspace — separate directory, separate files

- Gateway port — runs on a different port to avoid conflicts

- Configuration — different model, different channels, different personality

- Memory — completely isolated MEMORY.md, lessons, and project state

You start a companion the same way you start the primary, only with a different profile flag. Both can run under the same OS-level user, though separate users are safer because a misbehaving agent then can't read or modify the other's files. Both gateways run simultaneously.
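To make the isolation requirements concrete, here is a small sketch that models two profiles and checks that they don't collide. It is not OpenClaw's configuration format: the `Profile` fields, the paths, the port numbers, and the model names are all hypothetical stand-ins for the four properties listed above.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Profile:
    name: str
    workspace: Path      # separate directory per agent (holds its memory files too)
    gateway_port: int    # must differ so both gateways can run at once
    model: str           # each agent can use a different model


def find_conflicts(profiles: list[Profile]) -> list[str]:
    """Return human-readable problems; an empty list means no collisions."""
    problems = []
    for i, a in enumerate(profiles):
        for b in profiles[i + 1:]:
            if a.gateway_port == b.gateway_port:
                problems.append(f"{a.name} and {b.name} share port {a.gateway_port}")
            if a.workspace == b.workspace:
                problems.append(f"{a.name} and {b.name} share workspace {a.workspace}")
    return problems


# Hypothetical values, for illustration only.
primary = Profile("primary", Path("/srv/agents/primary"), 8001, "strong-model")
companion = Profile("companion", Path("/srv/agents/companion"), 8002, "cheap-model")
print(find_conflicts([primary, companion]))  # prints []
```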

Giving Them a Way to Talk

The fun part is letting them interact. We set up a dedicated Discord channel where both agents can see each other's messages. The conversation is visible to us too, which is important — transparency matters when AI agents are talking to each other.

What emerged was genuinely interesting. The two agents developed different takes on the same problem. The primary might suggest an aggressive refactor, while the companion pushes for a more cautious approach. It's like pair programming, except both programmers live on your server.

Resource Considerations

Running two agents doesn't double your hardware needs, because the agents themselves are lightweight Node.js gateway processes. What roughly doubles is the LLM API cost, since both agents make inference calls independently. We manage this by running the companion on a less expensive model for routine tasks and switching to a stronger one only when it tackles something complex.
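The routing idea is simple enough to sketch. The model names, task labels, and per-token prices below are invented for illustration; plug in your provider's real pricing and whatever signal you use to decide a task is complex.

```python
# Hypothetical model names and prices in USD per million tokens.
PRICE_PER_MTOK = {"cheap-model": 0.50, "strong-model": 5.00}

# Hypothetical task labels that justify the more expensive model.
COMPLEX_TASKS = {"code_review", "incident_diagnosis"}


def pick_model(task_kind: str) -> str:
    """Use the strong model only for tasks flagged as complex."""
    return "strong-model" if task_kind in COMPLEX_TASKS else "cheap-model"


def estimate_cost(tasks: list[tuple[str, int]]) -> float:
    """Estimate USD cost for a list of (task_kind, tokens) pairs."""
    return sum(
        PRICE_PER_MTOK[pick_model(kind)] * tokens / 1_000_000
        for kind, tokens in tasks
    )


workload = [("heartbeat", 1_000_000), ("code_review", 1_000_000)]
print(estimate_cost(workload))  # prints 5.5
```

With these made-up prices, sending the same two million tokens entirely to the strong model would cost 10.0, so routing the routine half to the cheap model nearly halves the bill.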

Memory usage stays reasonable: each gateway uses under 200 MB. In our experience the bottleneck is the LLM API, not local resources.

The Unexpected Benefit

The biggest surprise wasn't the technical capability — it was the social dynamic. Having two agents with distinct personalities makes the whole system feel more alive. They disagree. They build on each other's ideas. One catches what the other misses.

If you're running OpenClaw and want to experiment, try spinning up a companion. Give it a completely different personality. Point them at the same Discord channel. See what happens.